The narrative surrounding artificial intelligence has shifted from the inherent opacity of neural networks to the systemic risks posed by how these models are deployed. While explaining a model's internal decision-making remains a technical challenge, the orchestration layer (the infrastructure that connects models to enterprise data and external actions) is increasingly becoming a liability in its own right. Courts and regulators are moving toward a standard where the lack of transparency in automated workflows is no longer a defensible design choice.

Liability in Automated Orchestration

Organizations have long treated the orchestration layer as a proprietary black box to protect intellectual property and streamline complex workflows. This approach obscures how inputs are routed, which data sources are prioritized, and how final outputs are validated. As enterprises integrate these systems into critical business functions, the inability to audit the decision path creates significant legal exposure. When an automated system makes a decision that results in financial or operational harm, the defense that the model is a black box will likely fail if the orchestration logic itself is undocumented or opaque.

Legal scrutiny is beginning to focus on the chain of custody for data within these pipelines. If a company cannot demonstrate the logic governing its AI agents, it will struggle to meet compliance standards related to data privacy and algorithmic fairness. The shift toward "Implementation Physics" suggests that how a system actually operates in production must be as transparent as the model itself. Companies that fail to map their orchestration logic are effectively inviting regulatory intervention and litigation.
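
One way to make a chain of custody demonstrable is a tamper-evident record of each stage a piece of data passes through. The sketch below is illustrative only: the field names (`stage`, `source`, `payload_sha256`) and the helpers `append_record` and `verify_chain` are hypothetical, not drawn from any specific regulation or product. Each record stores a hash of the previous one, so a later edit to any entry becomes detectable.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(body: Dict) -> str:
    """Stable SHA-256 over a record body (keys sorted for determinism)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(chain: List[Dict], stage: str, source: str, payload: str) -> Dict:
    """Append a custody record linked to the previous record's hash.

    Only a hash of the payload is stored, so the log itself does not
    duplicate potentially sensitive data.
    """
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    body = {
        "stage": stage,    # hypothetical label, e.g. "retrieve" or "prompt"
        "source": source,  # hypothetical label for the system that supplied the data
        "payload_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "prev_hash": prev_hash,
    }
    record = dict(body, hash=_digest(body))
    chain.append(record)
    return record


def verify_chain(chain: List[Dict]) -> bool:
    """Return True only if every record is unmodified and correctly linked."""
    prev_hash = GENESIS
    for record in chain:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


if __name__ == "__main__":
    chain: List[Dict] = []
    append_record(chain, "retrieve", "crm_db", "customer record")
    append_record(chain, "prompt", "orchestrator", "summarize customer record")
    print(verify_chain(chain))
```

A record like this does not explain the model's reasoning. It only shows which data entered the pipeline, from where, and in what order, which is the part of the decision path the deployer controls.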

The Shift Toward Auditability

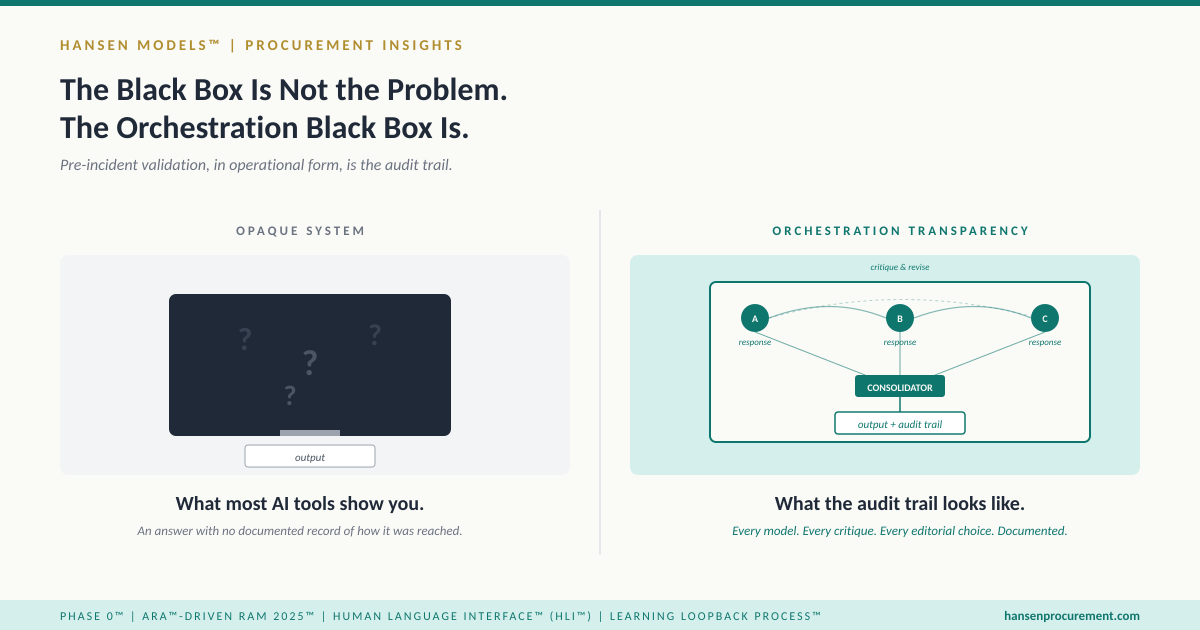

To mitigate these risks, firms must transition from opaque automation to observable orchestration. This requires a fundamental change in how AI systems are architected: they must produce clear, traceable logs of every interaction between the model and the enterprise environment. This level of visibility also matters for stock market analysis, as investors increasingly demand clarity on the operational risks associated with AI adoption. In practice, the transition involves:

- Standardizing the logging of decision-making triggers within the orchestration layer.

- Implementing human-in-the-loop validation for high-stakes automated actions.

- Developing rigorous documentation for data routing and model selection criteria.
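
The three practices above can be sketched in a few dozen lines. This is a minimal illustration under stated assumptions, not a reference implementation: the event schema, the routing rule in `choose_model`, the model names, and the `high_stakes_threshold` value are all hypothetical. The sketch logs each routing decision with its reason, requires a human approver for high-stakes actions, and keeps the routing criteria in one documented function.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class AuditEvent:
    """One traceable step in an orchestrated workflow (hypothetical schema)."""
    trace_id: str
    step: str  # "route", "approval", or "action"
    detail: Dict
    timestamp: float = field(default_factory=time.time)


class AuditLog:
    """Append-only, in-memory log; a real system would use durable storage."""

    def __init__(self) -> None:
        self.events: List[AuditEvent] = []

    def record(self, trace_id: str, step: str, **detail) -> None:
        self.events.append(AuditEvent(trace_id, step, detail))

    def to_jsonl(self) -> str:
        """Serialize events as JSON Lines for export to an auditor."""
        return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in self.events)


def choose_model(request: Dict) -> Tuple[str, str]:
    """Documented routing rule: returns (model_name, reason)."""
    if request.get("contains_pii"):
        return "internal-model", "request flagged as containing PII"
    return "general-model", "default route"


def run_workflow(
    request: Dict,
    log: AuditLog,
    approver: Callable[[Dict], bool],
    high_stakes_threshold: float = 10_000.0,  # assumed cutoff for illustration
) -> str:
    trace_id = str(uuid.uuid4())

    # Log the decision trigger: which model was selected and why.
    model, reason = choose_model(request)
    log.record(trace_id, "route", model=model, reason=reason)

    # Human-in-the-loop gate for high-stakes actions.
    amount = float(request.get("amount", 0.0))
    if amount >= high_stakes_threshold:
        approved = approver(request)
        log.record(trace_id, "approval", required=True, approved=approved)
        if not approved:
            log.record(trace_id, "action", status="blocked")
            return "blocked"
    else:
        log.record(trace_id, "approval", required=False, approved=None)

    log.record(trace_id, "action", status="executed")
    return "executed"


if __name__ == "__main__":
    log = AuditLog()
    # The lambda stands in for a real human review step.
    run_workflow({"amount": 50_000, "contains_pii": True}, log, approver=lambda r: False)
    print(log.to_jsonl())
```

The design choice worth noting is that every branch writes a log entry, including the branch where no approval was required, so an auditor can distinguish "not needed" from "not recorded".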

These steps are not merely technical requirements; they are necessary components of modern corporate governance. As seen with Apple (AAPL), the integration of sophisticated AI features into consumer and enterprise products necessitates a high degree of operational accountability. The market is beginning to differentiate between companies that view orchestration as a proprietary secret and those that view it as a transparent, auditable asset.

The Next Marker for Enterprise AI

The next concrete marker for this transition will be the emergence of standardized audit requirements for AI-driven workflows. As legal precedents solidify, the burden of proof will shift entirely to the deployer. Companies that have built their infrastructure on opaque orchestration will face significant costs to retrofit their systems for compliance. The immediate priority for leadership teams is to assess their current AI pipelines for observability. The era of claiming ignorance regarding the mechanics of automated orchestration is ending, and the cost of non-compliance will be measured in both legal penalties and diminished market trust.