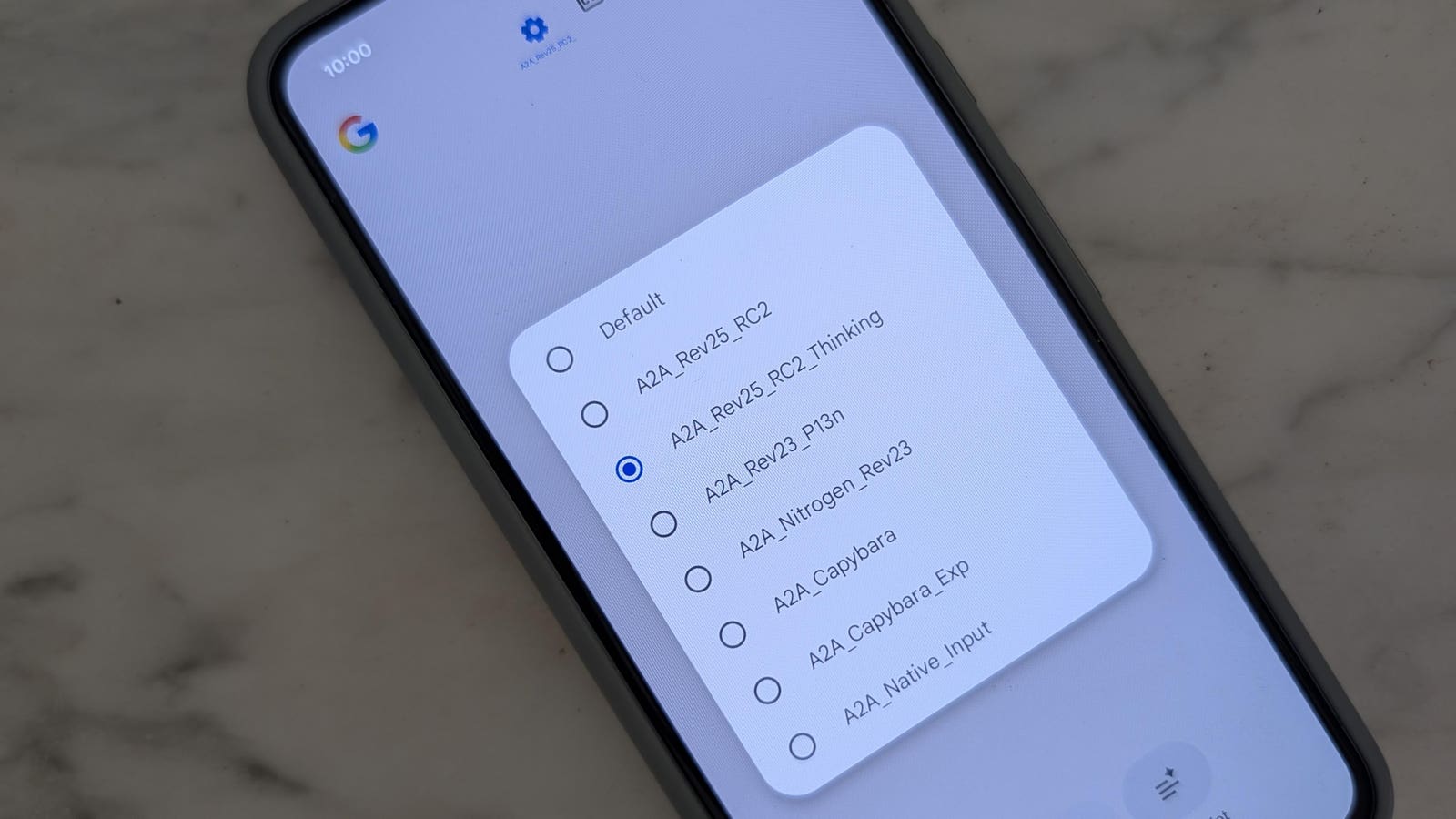

Seven previously unseen voice AI models are sitting inside the Google app, hidden behind a server-side flag and accessible only through a model selector menu that is still under development. The find, uncovered while investigating an unrelated feature, suggests Alphabet is test-driving a far broader Gemini Live lineup ahead of Google I/O 2026 on May 19. The consequence for shareholders is immediate: the event now carries a distinct product-cycle catalyst that the current consensus may not be pricing.

The seven model options include codenames Capybara, Nitrogen, Capybara Exp, P13n, an audio-to-audio A2A_Rev25_RC2, a Thinking variant with enhanced reasoning, and the existing Flash Live model. Two of those, the RC2 releases, appeared on the server on May 8, carrying the “Release Candidate 2” label that indicates production-readiness is close rather than months away.

Alphabet shares have not yet moved on the report. The model selector remains hidden from users and the user interface is unpolished. The market, however, now has a concrete set of product signals to weigh against a year in which voice AI monetization has been an open question for the stock.

I/O 2026 becomes a real-time risk event for the Gemini narrative

Google I/O is always a potential catalyst, yet this year the teardown changes the risk-reward setup. Instead of a generic keynote, investors can map specific capabilities to a potential tiered voice offering. The model selector is designed to add or remove models without an app update, a feature that makes a live demo at I/O technically trivial.

If Alphabet unveils the ability to switch between Fast, Thinking, and personalized voice models – or ties premium voice experiences to a subscription tier – the narrative flips from “voice AI as cost center” to “voice AI as ARPU driver.” Conversely, if the company presents only a minor update or delays public access, May 19 becomes a sell-the-news event for anyone who bid up expectations on these leaked details.

The exposure map

- Alphabet (GOOGL) is the primary name. Any direct monetization of Gemini Live through tiered pricing would lift revenue per user assumptions.

- Apple (AAPL) and Amazon (AMZN) appear as indirect beneficiaries or victims, depending on whether Google’s voice models widen the gap in assistant quality.

- NVIDIA (NVDA) enters the read-through as the hardware supplier likely powering the inference clusters that serve seven distinct real-time audio models.

- Cloud providers that host competing voice APIs, such as Microsoft Azure, may face pricing pressure if Google makes always-on, personalized voice cheap at scale.

Timeline and execution risk

The code confirms that the model selector list is served server-side. Alphabet can switch models without an app update, meaning the feature could go live at any moment after I/O. The RC2 labelling points to near-final candidates, yet the user interface is not polished, and the company has not officially acknowledged the feature. That leaves a wide range of outcomes: full launch at I/O, a developer preview, or a test reserved for internal experimentation.
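To make the server-side mechanism concrete, here is a minimal sketch of how a client could build its model menu from a list the server sends, so that adding or removing a model needs no app update. The payload shape, field names (`models`, `id`, `label`, `enabled`), and the `build_menu` helper are assumptions for illustration only; the teardown does not reveal the Google app's actual response format.

```python
import json
from dataclasses import dataclass

# Hypothetical server response. The structure and field names are
# assumptions for illustration, not the Google app's real format.
SERVER_RESPONSE = """
{
  "models": [
    {"id": "flash_live", "label": "Flash Live", "enabled": true},
    {"id": "thinking", "label": "Thinking", "enabled": true},
    {"id": "p13n", "label": "P13n", "enabled": false}
  ]
}
"""


@dataclass(frozen=True)
class VoiceModel:
    model_id: str
    label: str


def build_menu(raw: str) -> list[VoiceModel]:
    """Return the models the server currently marks as enabled.

    The client holds no hard-coded model list, so the menu changes
    whenever the server response changes.
    """
    payload = json.loads(raw)
    return [
        VoiceModel(model_id=m["id"], label=m["label"])
        for m in payload.get("models", [])
        if m.get("enabled", False)
    ]


if __name__ == "__main__":
    for entry in build_menu(SERVER_RESPONSE):
        print(entry.label)
```

Under this assumed design, flipping `enabled` to `true` for an entry on the server would surface it in the menu on the next fetch, which is the property that makes a same-day launch plausible.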

The voice AI models and what their test results reveal

Testing of the seven models by the source produced measurable behavioral differences. Those differences matter because they suggest the company is not merely testing infrastructure but evaluating distinct assistant personalities and capability sets.

Location access splits the field

Four of the seven hidden models accessed the device’s location when asked for a live weather update. The remaining three prompted the user to provide a location first. That split implies Alphabet is deliberately calibrating how much user context voice models receive, perhaps because the most powerful configurations will require opt-in sharing or be reserved for paying subscribers.

Personalization marks a clear differentiator

The P13n model, whose name is a common abbreviation of “personalization,” did not assume the user’s time zone when asked for the date and time. It asked first. Later in the conversation it recalled personal details shared earlier and reused them naturally. In contrast, the current public Gemini Live model refused to reference any personal information already provided in the same session.

Three models overall promised to remember personal information when asked. Three others refused. This suggests a deliberate fork between models that feel like a persistent assistant and models that behave like a stateless query tool.

Identity and accuracy checks point to underlying model variations

When asked what model it was, Capybara identified itself as “Gemini 3.1 Pro” rather than the expected “Gemini 3.1 Flash Live.” Two models, including Nitrogen, challenged a deliberately false claim made during testing; the others accepted it without question. The variation implies distinct combinations of base models, fine-tuning, and guardrails rather than a single architecture with different names.

Key insight: Google is road-testing a spectrum of voice assistants with different levels of context, memory, and scepticism. That is the raw material for a tiered product strategy.

What the model suite means for Alphabet’s revenue path

Alphabet currently offers model selection in the text-based Gemini interface – Fast, Thinking, Pro – but Gemini Live sticks to a single model. Extending choice to voice chats would create a natural upgrade ladder. A user who wants a more thoughtful, personalized voice assistant could be routed to a higher-tier subscription, or the feature could be bundled with Google One to raise retention.

- A premium voice tier could command an incremental $5–$10 per month, directly lifting the subscription segment that delivered $10.8 billion in revenue last quarter.

- Enterprise Workspace customers could see differentiated voice models that handle meetings and scheduling, strengthening the stickiness of the productivity suite.

- Advertising tie-ins become clearer if personalization models recall user preferences and make real-time recommendations in voice exchanges.

None of this is priced with certainty. The code teardown simply removes the argument that the company has no roadmap beyond the current Flash Live implementation.
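As a rough sense of scale, the sketch below works through the arithmetic behind the $5–$10 premium-tier range against the $10.8 billion quarterly subscription figure cited above. The subscriber counts are purely hypothetical inputs chosen for illustration; the article gives no adoption estimate, and the function name is likewise an assumption.

```python
# Quarterly subscription revenue cited in the article (USD).
BASELINE_QUARTERLY_REVENUE = 10.8e9

MONTHS_PER_QUARTER = 3


def incremental_quarterly_revenue(subscribers: int, price_per_month: float) -> float:
    """Extra quarterly revenue if `subscribers` pay `price_per_month` more."""
    return subscribers * price_per_month * MONTHS_PER_QUARTER


if __name__ == "__main__":
    # Hypothetical adoption scenarios, not estimates from the source.
    for subscribers in (1_000_000, 5_000_000, 10_000_000):
        for price in (5.0, 10.0):
            extra = incremental_quarterly_revenue(subscribers, price)
            share = extra / BASELINE_QUARTERLY_REVENUE
            print(
                f"{subscribers:>10,} subscribers at ${price:.0f}/month: "
                f"${extra / 1e6:,.0f}M per quarter ({share:.2%} of baseline)"
            )
```

Even the most generous scenario here, ten million subscribers at $10 a month, adds $300 million a quarter, or roughly 2.8% of the cited figure. On these assumed numbers, the case for a premium voice tier rests more on retention and narrative than on an immediate step change in revenue.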

The risk: overhyped expectations and a minimal rollout

Alphabet has a history of showing experimental AI functions at I/O that later land in a limited geography, a single language, or a Pixel-exclusive release. The model selector infrastructure being in place does not guarantee a consumer-facing launch on May 19.

Risk to watch: the presentation delivers a controlled demo with no public access date, and the stock gives back any pre-I/O premium that built on the leak.

On the day, specific language will matter. If executives describe the capability as “available to trusted testers later this year,” the market will likely treat it as a long-dated option rather than a near-term revenue driver. A firm launch date or immediate availability for Gemini Advanced subscribers would have the opposite effect.

What would make the risk worse

- No mention of model selection at I/O, confirming the menu is purely internal testing.

- An announcement limited to a single new model with no tiered pricing.

- A Pixel-exclusive rollout that limits the addressable user base.

What would reduce the risk

- A clear subscription tier with model choice, live demos, and a launch date.

- Multi-language support that opens the feature to high-ARPU markets outside the US.

- Developer APIs that let third-party apps plug into the same voice model spectrum.

Next marker: Google I/O 2026 begins May 19

The keynote is one week from now. The model selector code suggests Alphabet can add or remove models without pushing an app update, which keeps the door open to a surprise reveal. The RC2 label signals that technical risk is lower than it would be for early experimental builds.

For an Alphabet shareholder or options trader, the May 19 event is no longer a generic technology showcase. It is a binary checkpoint on whether voice AI becomes a monetizable product layer in the next 12 months. Any read-through to the stock’s forward price-to-earnings multiple will hinge on the specificity of the product roadmap that executives present.

The stock market’s current AI enthusiasm still flows predominantly to infrastructure providers and model trainers. A credible voice AI service launch from the company that owns Android and Chrome could redirect a portion of that attention – and the associated premium valuations – toward the application layer. The hidden model selector is not a guarantee. It is a trail of evidence that makes I/O 2026 a genuine risk event for the Gemini Live investment thesis.
