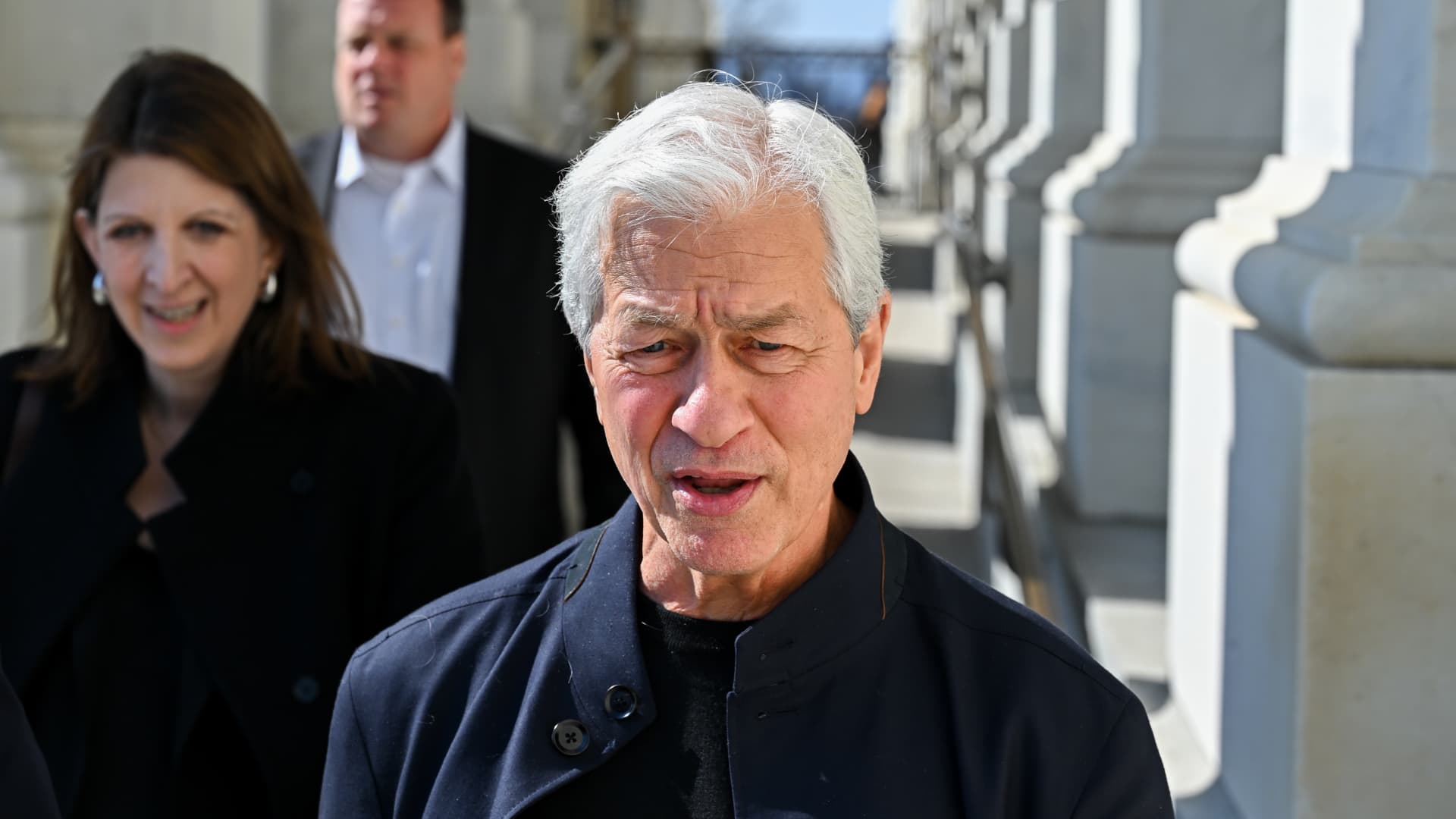

Jamie Dimon Warns Anthropic’s Mythos Model Exposes New Cybersecurity Risks

JPMorgan Chase CEO Jamie Dimon warns that the Anthropic Mythos model exposes significant new cybersecurity vulnerabilities, forcing firms to balance AI-driven productivity with heightened defensive risks.

The Double-Edged Blade of Enterprise AI

JPMorgan Chase CEO Jamie Dimon has issued a stark warning regarding the security implications of advanced artificial intelligence models. Speaking on the integration of new tools, Dimon focused on Anthropic’s Mythos, suggesting the model reveals a lot more vulnerabilities for potential cyberattacks than previously understood.

While major financial institutions have embraced generative AI to drive efficiency and productivity, the technology introduces a complex security surface. Dimon’s comments highlight a growing tension between the desire for rapid technological adoption and the reality of protecting sensitive institutional data from increasingly sophisticated digital threats.

Uncovering Systemic Weaknesses

Corporate leaders have long championed AI as a productivity boon. However, the specific capabilities of models like Mythos allow for a level of automated reconnaissance that was once manually intensive. By analyzing code and system architecture, these models can identify entry points that human developers might overlook.

"The remarks show how a technology welcomed by corporations as a productivity boon can also pose serious threats, like uncovering new ways to hack into systems."

This reality forces firms to reconsider their defensive posture. If a tool designed to streamline development can also map out a network's defenses, the traditional perimeter-based security model becomes less effective. For traders, the takeaway is that cybersecurity spending is likely to remain an elevated expense for the financial sector as firms scramble to patch these newly visible gaps.

Assessing the Threat Landscape

Security experts and bank executives are now evaluating how to contain these risks without stifling innovation. The primary concerns center on:

- Automated Vulnerability Discovery: AI models identifying software bugs at scale.

- Credential Harvesting: Enhanced phishing techniques powered by generative text.

- System Integrity: The risk of AI models being manipulated to provide incorrect or malicious code recommendations.

| Risk Factor | Potential Impact | Security Priority |
|---|---|---|
| Reconnaissance | High | Critical |
| Data Exfiltration | High | Critical |
| System Manipulation | Moderate | High |

Strategic Implications for Investors

Investors should watch how large institutions such as JPMorgan Chase (JPM) allocate capital toward defensive technology. While momentum investing persists, the market may start to penalize companies that fail to demonstrate a clear strategy for managing AI-driven security risks.

Looking ahead, the focus will be on the development of 'defensive AI.' If the same models being used to attack systems can be tuned to harden them, the industry might find a balance. Until then, the volatility surrounding tech-integrated financials could remain a feature of the current market cycle.